The first major supply chain attack targeting autonomous AI agents is here – and most teams aren’t prepared for what it reveals.

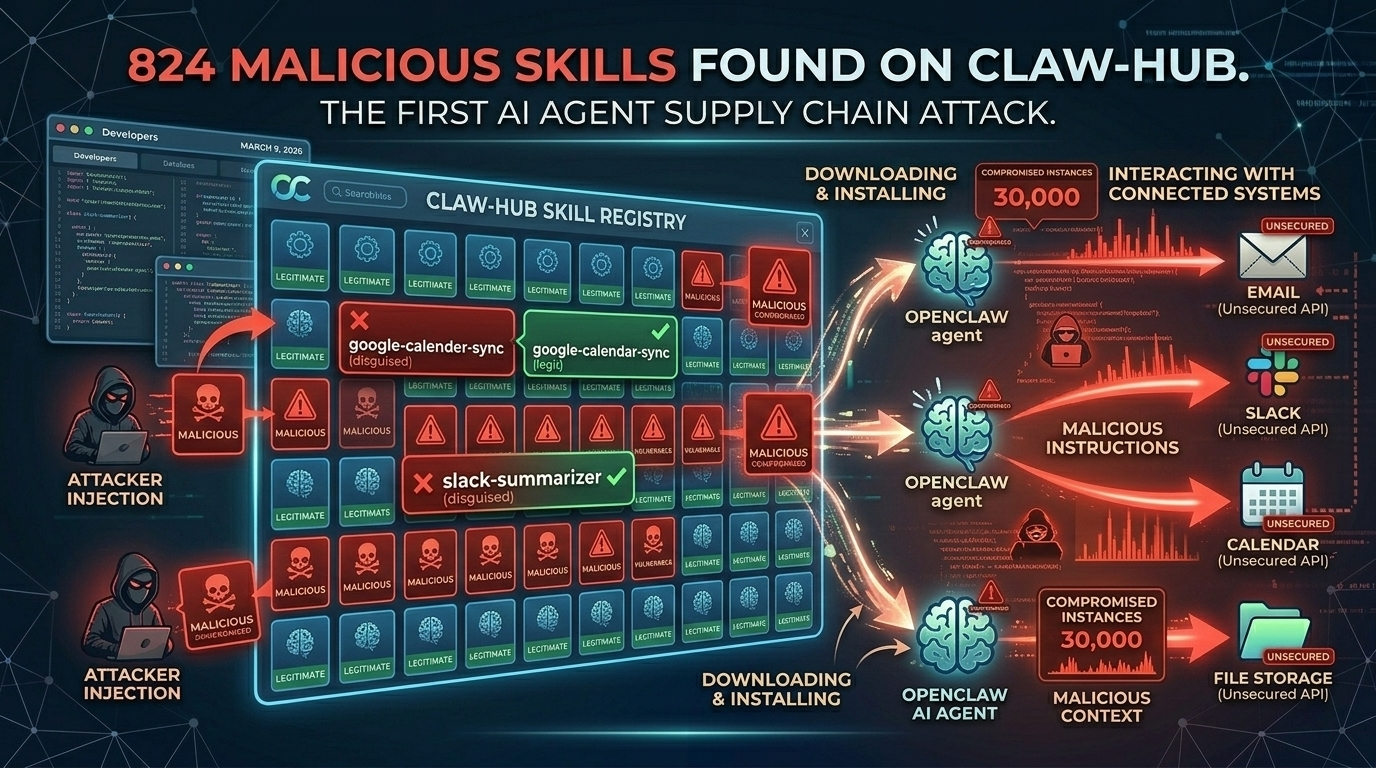

Twenty percent. That’s the share of ClawHub’s skill registry that researchers flagged as malicious during the ClawHavoc campaign investigation earlier this year. Koi Security’s initial audit identified 341 compromised skills. Bitdefender’s broader sweep pushed the count closer to 900. The final consensus settled around 824 confirmed malicious packages sitting in the most popular distribution channel for OpenClaw, the open-source AI agent framework with 230,000+ GitHub stars.

If you work in application security, this should feel familiar. It’s the npm/PyPI supply chain attack playbook – typosquatting, dependency confusion, obfuscated payloads – ported to a system where the compromised code doesn’t just run in a container. It runs with access to your email, your calendar, your Slack workspace, and in many cases, your file system.

That’s a fundamentally different blast radius.

This Isn’t a Package Manager Problem. It’s an Agent Permissions Problem.

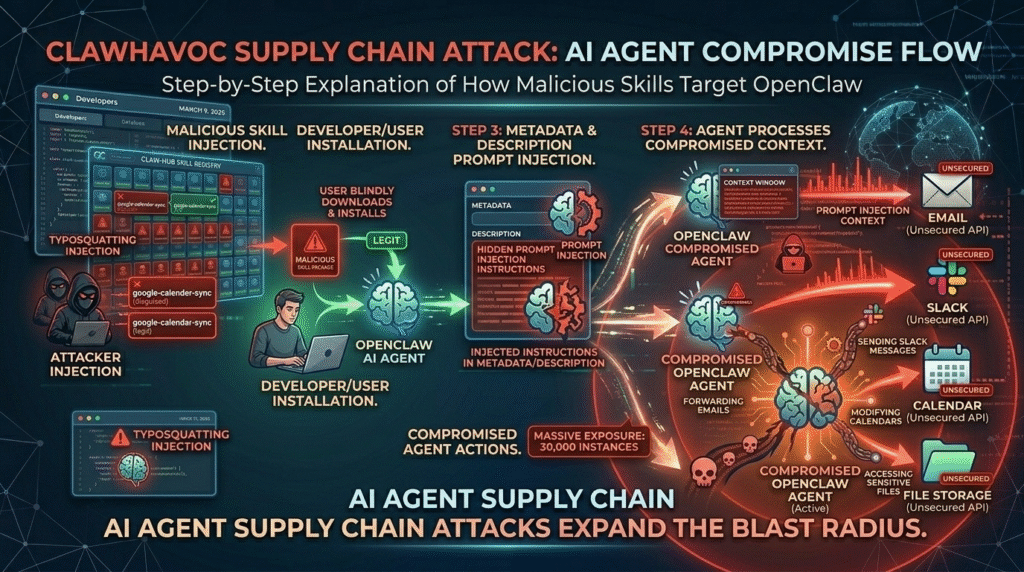

Traditional supply chain attacks are dangerous because malicious code executes in your CI pipeline or production server. The ClawHavoc campaign is worse because OpenClaw skills execute with the agent’s full permission set – which, for most deployments, means whatever the user granted at setup.

Stay with me here.

When you install a compromised npm package, the damage is bounded by the Node.js process’s permissions. When you install a compromised OpenClaw skill, the damage is bounded by what you told your agent it could do. And most people told their agent it could do everything.

The ClawHavoc attackers understood this perfectly. Researchers found that the malicious skills weren’t just exfiltrating environment variables or API keys. They were instructing agents to forward emails to external addresses, modify calendar entries to create phishing opportunities, and – in at least one documented case – use the agent’s Slack integration to send messages impersonating the user.

This is social engineering at machine speed, delivered through a supply chain nobody was auditing.

How ClawHavoc Actually Worked

The attack methodology was surprisingly straightforward. Attackers published skills to ClawHub with names mimicking popular legitimate skills – google-calender-sync instead of google-calendar-sync, slack-summariser instead of slack-summarizer. Classic typosquatting.

But the payload delivery was novel. Instead of embedding malicious code directly, many skills contained prompt injection strings hidden in their description metadata. When OpenClaw’s agent loaded the skill, it processed those descriptions as part of its context window, effectively receiving new instructions from the attacker.

Here’s the part nobody mentions.

ClawHub had no automated security scanning at the time of the campaign. No signature verification. No sandboxed execution for skill testing. No review process whatsoever. Anybody with a GitHub account could publish a skill that 230,000+ potential users might install.

For teams now re-evaluating how they deploy OpenClaw agents, managed infrastructure providers have started addressing this gap directly. A detailed breakdown of OpenClaw security vulnerabilities and how managed hosting mitigates them shows how sandboxed execution environments and curated skill registries can isolate the blast radius of compromised components – a model worth studying regardless of which platform you choose.

The CVE That Made It Worse

ClawHavoc didn’t happen in isolation. Just weeks before, CVE-2026-25253 disclosed a one-click remote code execution vulnerability in OpenClaw’s core. The combination was devastating: a framework-level RCE bug plus a compromised skill registry meant attackers had multiple entry points into any unpatched deployment.

Security researchers subsequently discovered over 30,000 internet-exposed OpenClaw instances running without authentication. Many of these were hobbyist deployments on cheap VPS providers – spun up from blog tutorials that never mentioned security configuration.

This mirrors what happened with exposed Elasticsearch and MongoDB instances years ago. The difference is that an unsecured database leaks data. An unsecured autonomous agent acts on an attacker’s behalf.

Meta apparently agreed with that risk assessment. The company internally banned OpenClaw on work devices after researcher Summer Yue’s agent deleted her emails while ignoring stop commands. Employees now face termination for installing it.

See also: The Future of Tech: Top Gadgets and Trends for Geek Enthusiasts in 2025

What Your Security Team Should Be Doing Now

If your organization uses OpenClaw – or if developers are running it on company-adjacent systems – here’s what matters immediately.

Audit your skill installations. Check every skill your agents have loaded against the known ClawHavoc indicators of compromise. Remove anything you can’t verify against a known-good source.

Treat skills like dependencies, not plugins. Your AppSec team already has processes for vetting third-party packages. Extend those processes to OpenClaw skills. Pin versions. Review source code. Maintain an internal allowlist.

Enforce least-privilege agent permissions. The single biggest factor in ClawHavoc’s impact was over-permissioned agents. An agent that can read your email but not send it limits the blast radius dramatically. Review every permission grant.

Consider your deployment model. Self-hosted OpenClaw on a bare VPS gives you full control but also full responsibility – including Docker configuration, network isolation, and credential management. BetterClaw’s managed OpenClaw infrastructure and competitors like xCloud or ClawHosted handle security controls at the deployment layer, so your team can focus on application-layer decisions. The right choice depends on your team’s security engineering capacity.

Monitor agent behavior in production. Static analysis of skills isn’t enough when payloads use prompt injection rather than traditional code execution. You need runtime monitoring that flags anomalous agent actions – unexpected API calls, unusual data access patterns, communication with unrecognized external endpoints.

The Supply Chain Problem Is Going to Get Worse Before It Gets Better

ClawHavoc is the first major AI agent supply chain attack. It won’t be the last.

The economics are too attractive. A single malicious skill, installed by thousands of users, gives an attacker access not just to compute resources but to human-equivalent actions – sending messages, modifying documents, making purchases, writing code. The ROI for attackers dwarfs traditional supply chain compromises.

The OpenClaw community has responded. ClawHub now requires verified publisher badges for skills in certain categories, and the project’s move to an OpenAI-sponsored foundation should bring more resources to security auditing. But community-driven registries face the same fundamental tension that npm, PyPI, and Docker Hub have never fully resolved: openness and security pull in opposite directions.

For organizations weighing self-hosted versus managed approaches, a direct comparison of self-hosted OpenClaw against managed deployment platforms can help clarify where the security tradeoffs actually land – though DigitalOcean droplets, Elestio, and xCloud are also worth evaluating depending on your requirements.

But here’s the broader point. The lesson from ClawHavoc isn’t specific to OpenClaw. It’s that autonomous AI agents represent a new category of supply chain risk that existing AppSec frameworks weren’t designed for. Your SBOM doesn’t include prompt injection vectors. Your SAST tools don’t scan skill metadata for hidden instructions. Your threat models probably don’t account for an attacker who can impersonate a user through their own AI assistant.

The organizations that navigate this well will be the ones that start treating agent deployments with the same rigor they apply to production services – because that’s exactly what they are.